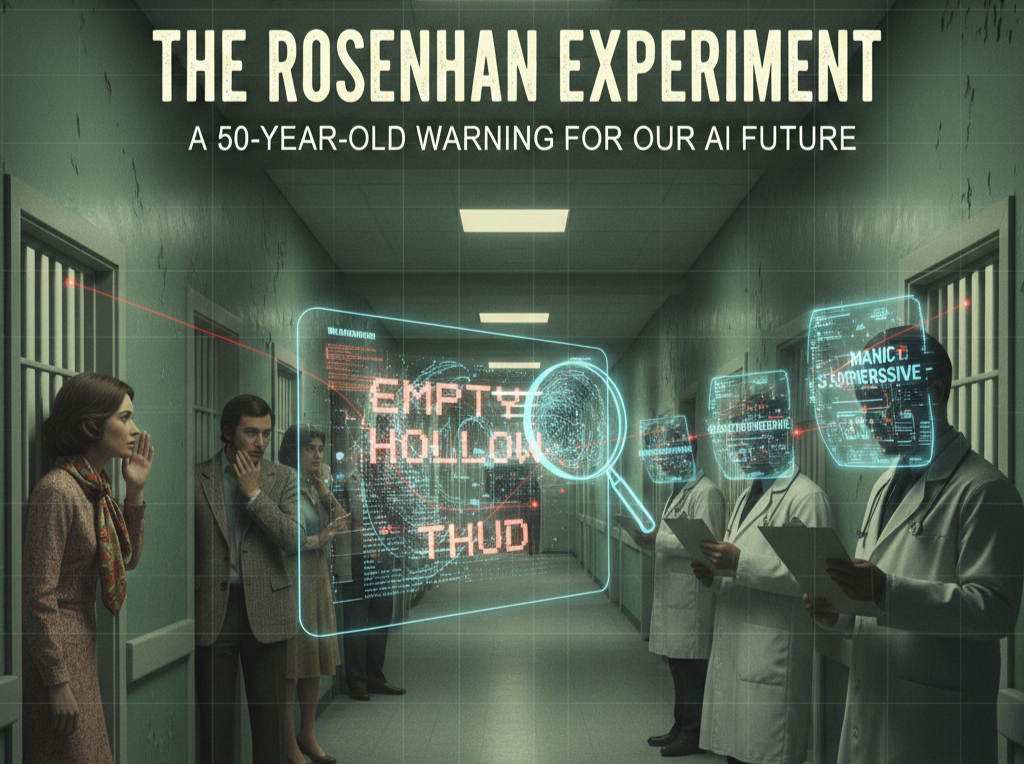

The Rosenhan Experiment: A 50-Year-Old Warning for Our AI Future


The year was 1973. Psychologist David Rosenhan published the results of an experiment in which sane volunteers presented themselves at psychiatric hospitals and reported a single symptom: hearing voices that said "empty," "hollow," and "thud." The results were shocking: all were admitted, all were diagnosed with a severe mental illness, and their ordinary behavior was reinterpreted as symptomatic. They stayed an average of 19 days before being discharged, and ironically, it was often the real patients who saw through the facade.

Rosenhan’s study, "On Being Sane in Insane Places," didn't just rattle the psychiatric world; it offers a profound lesson for us today, especially as we navigate the complexities of AI and technology integration.

The "Labeling Bias" Trap:

The core takeaway from Rosenhan's work is the dangerous power of an initial label. Once a diagnosis was made, it created an unshakeable filter through which all subsequent observations were perceived. Doctors, operating within a flawed system, were primed to see illness, even when confronted with health.

Why This Matters for Technology Literacy and AI:

This "labeling bias" is not unique to human perception; it's a critical vulnerability in the systems we build:

Algorithmic Bias: Just as the hospital staff were "programmed" by the initial diagnosis, AI models can inherit and amplify biases present in their training data. If an algorithm is trained on skewed or incomplete data, it will perpetuate and even exacerbate those biases in its outputs – whether it’s in hiring, loan applications, or even medical diagnoses.
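To make the inheritance of bias concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `HISTORY` records are invented, the groups "A" and "B" are placeholders, and the "model" is deliberately naive (it only memorizes past hire rates per group). Real systems are far more complex, but the mechanism is similar: a skewed record of past decisions becomes the rule for future ones.

```python
from collections import defaultdict

# Hypothetical historical hiring records: (group, was_hired).
# The skew is deliberate: group "A" was hired far more often than group "B".
HISTORY = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 30 + [("B", False)] * 70
)


def train(records):
    """Learn the historical hire rate per group (a deliberately naive model)."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for group, was_hired in records:
        total[group] += 1
        hired[group] += int(was_hired)
    return {group: hired[group] / total[group] for group in total}


def predict(model, group, threshold=0.5):
    """Recommend hiring when the learned rate for the group meets the threshold."""
    return model[group] >= threshold


model = train(HISTORY)
for group in sorted(model):
    # The model simply turns the past skew into a rule about the future.
    print(group, model[group], predict(model, group))
```

Nothing in this toy model looks at an individual candidate. It recommends everyone in group "A" and no one in group "B" purely because of the label history it was given, which is the algorithmic version of the initial diagnosis.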

Confirmation Bias in Data Interpretation: When we rely too heavily on an AI's initial classification or prediction, we risk falling into the same trap as the clinicians. We might subconsciously seek out data that confirms the AI's "diagnosis" and overlook evidence that contradicts it.

The Need for Human-Centered AI: The Rosenhan Experiment underscores that labels, no matter how sophisticated, should never replace critical human judgment, empathy, and a holistic understanding of context. In AI, this translates to designing systems that are transparent, interpretable, and always subject to human oversight and ethical review. We need to train people to question the data and the labels, not just accept them.
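One way to picture human oversight in practice is a simple triage rule, sketched below in Python. This is an illustration under stated assumptions, not a recommended design: the `Prediction` class, the `triage` function, the 0.9 threshold, and the spot-check interval are all hypothetical. The idea is that low-confidence labels always go to a person, and a share of high-confidence labels do too, because a confident label can still be wrong.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    case_id: str
    label: str
    confidence: float  # the model's own score in [0, 1]; assumed, not calibrated


def triage(predictions, review_threshold=0.9, audit_every=5):
    """Split predictions into a human review queue and an auto-accept queue."""
    queues = {"human_review": [], "auto_accept": []}
    confident_seen = 0
    for p in predictions:
        if p.confidence < review_threshold:
            # The model itself is unsure, so a person decides.
            queues["human_review"].append(p.case_id)
            continue
        confident_seen += 1
        if confident_seen % audit_every == 0:
            # Spot-check every Nth confident label as well.
            queues["human_review"].append(p.case_id)
        else:
            queues["auto_accept"].append(p.case_id)
    return queues


sample = [
    Prediction("c1", "approve", 0.95),
    Prediction("c2", "deny", 0.60),
    Prediction("c3", "approve", 0.99),
]
queues = triage(sample, audit_every=2)
print(queues)
```

With these sample values, "c2" is reviewed because the model is unsure, and "c3" is reviewed as a spot check even though the model is confident. The spot check is the part that speaks most directly to Rosenhan's lesson: the clinicians were confident too.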

As a Technology Literacy Advocate, my mission is to highlight how understanding human psychology and historical experiments like Rosenhan's is crucial for building responsible and equitable technological futures. We must empower individuals to not just use technology, but to critically evaluate its implications.

Let's learn from the past to build a better future, one where our perceptions—and our algorithms—don't distort reality, but illuminate it more clearly.
